2101: chore(all): update actix-web dependency to 4.0.0-beta.21 r=MarinPostma a=robjtede

# Pull Request

## What does this PR do?

I don't expect any more breaking changes to Actix Web that will affect Meilisearch so bump to latest beta.

Fixes #N/A?


## PR checklist

Please check if your PR fulfills the following requirements:

- [ ] Does this PR fix an existing issue?

- [x] Have you read the contributing guidelines?

- [x] Have you made sure that the title is accurate and descriptive of the changes?

Thank you so much for contributing to MeiliSearch!

Co-authored-by: Rob Ede <robjtede@icloud.com>

2095: feat(error): Update the error message when you have no version file r=MarinPostma a=irevoire

Following this [issue](https://github.com/meilisearch/meilisearch-kubernetes/issues/95) we decided to change the error message from:

```
Version file is missing or the previous MeiliSearch engine version was below 0.24.0. Use a dump to update MeiliSearch.
```

to

```
Version file is missing or the previous MeiliSearch engine version was below 0.25.0. Use a dump to update MeiliSearch.
```

Co-authored-by: Tamo <tamo@meilisearch.com>

2075: Allow payloads with no documents r=irevoire a=MarinPostma

Accept document additions that contain 0 documents.

0-byte payloads are still refused, since they are not valid JSON/JSON Lines/CSV anyway.
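A minimal sketch of the distinction, assuming a naive line-based CSV reader with no quoting support. `PayloadError` and `count_csv_documents` are hypothetical names for illustration, not the actual Meilisearch code:

```rust
/// Hypothetical error type for this sketch.
#[derive(Debug, PartialEq)]
pub enum PayloadError {
    /// The payload contains no bytes at all.
    Empty,
}

/// Count the documents in a CSV payload.
/// A 0-byte payload is refused, but a header with no rows is a valid
/// addition of 0 documents.
pub fn count_csv_documents(payload: &str) -> Result<usize, PayloadError> {
    if payload.is_empty() {
        return Err(PayloadError::Empty);
    }
    // The first non-blank line is treated as the header, every other one as a document.
    let lines = payload.lines().filter(|l| !l.trim().is_empty()).count();
    Ok(lines.saturating_sub(1))
}
```

With this sketch, `count_csv_documents("id,title\n")` is accepted with zero documents, while `count_csv_documents("")` is an error.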

close #1987

Co-authored-by: mpostma <postma.marin@protonmail.com>

2068: chore(http): migrate from structopt to clap3 r=Kerollmops a=MarinPostma

migrate from structopt to clap3

This fixes the long-standing issue of flags requiring a value, such as `--no-analytics` or `--schedule-snapshot`.

All flag arguments now take NO argument, i.e:

`meilisearch --schedule-snapshot true` becomes `meilisearch --schedule-snapshot`

As per https://docs.rs/clap/latest/clap/struct.Arg.html#method.env, the env variable is defined as:

> A false literal is n, no, f, false, off or 0. An absent environment variable will also be considered as false. Anything else will considered as true.
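To make the quoted rule concrete, here is a rough sketch of how a flag's environment value could be interpreted. `env_flag_enabled` is a hypothetical helper, not a clap function, and the case-insensitive comparison is an assumption of this sketch:

```rust
/// Interpret the value of an environment variable backing a boolean flag,
/// following the rule quoted above.
/// `None` means the variable is absent.
pub fn env_flag_enabled(value: Option<&str>) -> bool {
    match value {
        // An absent variable is considered false.
        None => false,
        // The listed false literals disable the flag (compared case-insensitively,
        // which is an assumption); anything else enables it.
        Some(raw) => !matches!(
            raw.to_lowercase().as_str(),
            "n" | "no" | "f" | "false" | "off" | "0"
        ),
    }
}
```

It could be called as `env_flag_enabled(std::env::var("SOME_FLAG").ok().as_deref())`, where `SOME_FLAG` is a placeholder variable name.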

`@gmourier`

`@curquiza`

`@meilisearch/docs-team`

Co-authored-by: mpostma <postma.marin@protonmail.com>

430: Document batch support r=Kerollmops a=MarinPostma

This PR adds support for document batches in milli. It changes the API of the `IndexDocuments` builder by adding an `add_documents` method. The API of the updates is changed a little, with the `UpdateBuilder` being renamed to `IndexerConfig` and being passed to the update builders. This makes it easier to pass around structs that need to access the indexer config, rather than extracting the fields each time. This change impacts many function signatures and simplifies them.

The change is not thorough, and may require another PR to propagate to the whole codebase. I restricted it to what is necessary for this PR.

Co-authored-by: Marin Postma <postma.marin@protonmail.com>

2084: bump milli r=Kerollmops a=irevoire

- Fix https://github.com/meilisearch/MeiliSearch/issues/2082 by updating milli dependency

- Fix Clippy error

- Change the MeiliSearch version in the `Cargo.toml` to anticipate the upcoming release (v0.25.2)

Co-authored-by: Tamo <tamo@meilisearch.com>

433: fix(filter): Fix two bugs. r=Kerollmops a=irevoire

- Stop lowercasing the field when looking in the field id map

- When a field id does not exist it means there is currently zero documents containing this field thus we return an empty RoaringBitmap instead of throwing an internal error
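The two fixes can be sketched as follows. This is only an illustration: `SketchIndex` and `docids_for_field` are hypothetical, and a `BTreeSet<u32>` stands in for the `RoaringBitmap` so the example needs nothing beyond the standard library:

```rust
use std::collections::{BTreeSet, HashMap};

pub type FieldId = u16;
/// Stand-in for `RoaringBitmap` in this sketch.
pub type DocIds = BTreeSet<u32>;

/// Hypothetical, simplified index: a field id map plus the doc ids per field.
pub struct SketchIndex {
    pub fields_ids_map: HashMap<String, FieldId>,
    pub docids_by_field: HashMap<FieldId, DocIds>,
}

impl SketchIndex {
    /// Return the documents containing `field`.
    pub fn docids_for_field(&self, field: &str) -> DocIds {
        // The field name is looked up as-is, without lowercasing it first.
        match self.fields_ids_map.get(field) {
            Some(id) => self.docids_by_field.get(id).cloned().unwrap_or_default(),
            // Unknown field id: no document contains this field, so the
            // result is an empty set rather than an internal error.
            None => DocIds::new(),
        }
    }
}
```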

Will fix https://github.com/meilisearch/MeiliSearch/issues/2082 once meilisearch is released

Co-authored-by: Tamo <tamo@meilisearch.com>

426: Fix search highlight for non-unicode chars r=ManyTheFish a=Samyak2

# Pull Request

## What does this PR do?

Fixes https://github.com/meilisearch/MeiliSearch/issues/1480


## PR checklist

Please check if your PR fulfills the following requirements:

- [x] Does this PR fix an existing issue?

- [x] Have you read the contributing guidelines?

- [x] Have you made sure that the title is accurate and descriptive of the changes?

## Changes

The `matching_bytes` function takes a `&Token` now and:

- gets the number of bytes to highlight (unchanged).

- uses `Token.num_graphemes_from_bytes` to get the number of grapheme clusters to highlight.

In essence, the `matching_bytes` function now returns the number of matching grapheme clusters instead of bytes.

Added proper highlighting in the HTTP UI:

- requires dependency on `unicode-segmentation` to extract grapheme clusters from tokens

- `<mark>` tag is put around only the matched part

- before this change, the entire word was highlighted even if only a part of it matched
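To illustrate the idea with only the standard library, here is a rough sketch that counts `char`s as a stand-in for grapheme clusters (the PR itself relies on `unicode-segmentation` for real grapheme segmentation). `num_chars_from_bytes` and `mark_prefix` are hypothetical names, not functions from milli or the HTTP UI:

```rust
/// Convert a number of matching bytes at the start of `word` into a number
/// of `char`s (a simplification: the PR counts grapheme clusters instead).
pub fn num_chars_from_bytes(word: &str, num_bytes: usize) -> usize {
    word.char_indices()
        .take_while(|(start, _)| *start < num_bytes)
        .count()
}

/// Wrap only the first `num_chars` characters of `word` in a `<mark>` tag.
pub fn mark_prefix(word: &str, num_chars: usize) -> String {
    // Byte offset where the highlighted part ends, always on a char boundary.
    let split = word
        .char_indices()
        .nth(num_chars)
        .map_or(word.len(), |(offset, _)| offset);
    if split == 0 {
        return word.to_string();
    }
    format!("<mark>{}</mark>{}", &word[..split], &word[split..])
}
```

Slicing on a char boundary avoids the panic that indexing a `&str` in the middle of a multi-byte character would cause.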

## Questions

Since `matching_bytes` does not return number of bytes but grapheme clusters, should it be renamed to something like `matching_chars` or `matching_graphemes`? Will this break the API?

Thank you very much `@ManyTheFish` for helping 😄

Co-authored-by: Samyak S Sarnayak <samyak201@gmail.com>

432: Fuzzer r=Kerollmops a=irevoire

Provide a first way of fuzzing the indexing part of milli.

It depends on [cargo-fuzz](https://rust-fuzz.github.io/book/cargo-fuzz.html)

Co-authored-by: Tamo <tamo@meilisearch.com>

2076: fix(dump): Fix the import of dump from the v24 and before r=ManyTheFish a=irevoire

Same as https://github.com/meilisearch/MeiliSearch/pull/2073 but on main this time

Co-authored-by: Irevoire <tamo@meilisearch.com>

2066: bug(http): fix task duration r=MarinPostma a=MarinPostma

`@gmourier` found that the duration in the task view was not computed correctly, this pr fixes it.

`@curquiza`, I'll let you decide if we need to make a hotfix out of this or wait for the next release. This is not breaking.

Co-authored-by: mpostma <postma.marin@protonmail.com>

2057: fix(dump): Uncompress the dump IN the data.ms r=irevoire a=irevoire

When loading a dump with Docker, we had two problems.

After creating a temporary directory, then uncompressing and re-indexing the dump:

1. We tried to `move` the new `data.ms` onto the existing one. If the `data.ms` is a mount point, which is what people usually do with Docker, this fails: a mount point can't be overwritten, so we threw an error.

2. The tempdir was created in `/tmp`, which is usually quite small AND may not be on the same partition as the `data.ms`. When we tried to move the dump over the `data.ms`, it also failed because we can't move data between two partitions.

------------------

1 was fixed by deleting the *content* of the `data.ms` and moving the *content* of the tempdir *inside* the `data.ms`. If someone tries to create volumes inside the `data.ms`, that's their problem, not ours.

2 was fixed by creating the tempdir *inside* the `data.ms`. If a user mounted their `data.ms` on a large partition, there is no reason they should fail to load a big dump because their `/tmp` was too small. This solves the issue; the dump is now extracted and indexed on the same partition where the `data.ms` will live.
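A minimal sketch of both fixes using only `std::fs`, assuming the staging directory was created directly inside the database directory. `swap_in_staging` is a hypothetical helper, not the function used in Meilisearch:

```rust
use std::fs;
use std::io;
use std::path::Path;

/// Replace the *content* of `db_path` with the content of `staging`.
/// `staging` is assumed to be a directory created directly inside `db_path`,
/// so both live on the same partition and `db_path` itself is never replaced.
pub fn swap_in_staging(db_path: &Path, staging: &Path) -> io::Result<()> {
    // Delete everything in `db_path` except the staging directory.
    for entry in fs::read_dir(db_path)? {
        let entry = entry?;
        let path = entry.path();
        if path == staging {
            continue;
        }
        if entry.file_type()?.is_dir() {
            fs::remove_dir_all(&path)?;
        } else {
            fs::remove_file(&path)?;
        }
    }
    // Move every entry of the staging directory one level up.
    for entry in fs::read_dir(staging)? {
        let entry = entry?;
        fs::rename(entry.path(), db_path.join(entry.file_name()))?;
    }
    // The staging directory is now empty and can be removed.
    fs::remove_dir(staging)
}
```

Because the mount point itself is never moved or deleted, this works even when `db_path` is a Docker volume.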

fix #1833

Co-authored-by: Tamo <tamo@meilisearch.com>

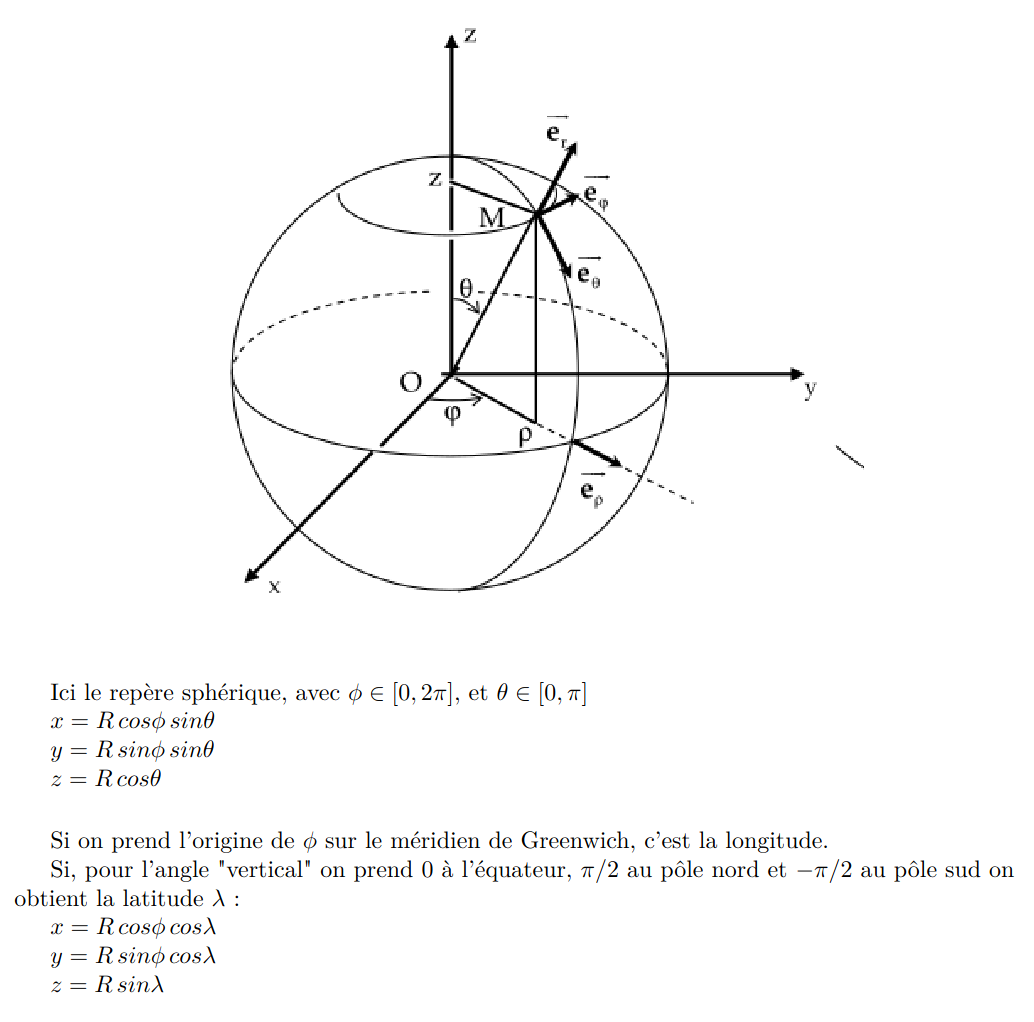

424: Store the geopoint in three dimensions r=Kerollmops a=irevoire

Related to this issue: https://github.com/meilisearch/MeiliSearch/issues/1872

Fix the whole computation of distance for any “geo” operations (sort or filter). Now when you sort points they are returned to you in the right order.

And when you filter on a specific radius you only get points included in the radius.

This PR changes the way we store the geo points in the RTree.

Instead of considering the latitude and longitude as orthogonal coordinates, we convert them to real orthogonal coordinates projected on a sphere with a radius of 1.

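This is the conversion formula, for a latitude $\varphi$ and a longitude $\lambda$ expressed in radians:

$$x = \cos\varphi \cos\lambda, \qquad y = \cos\varphi \sin\lambda, \qquad z = \sin\varphi$$

Which, in Rust, translates to this function: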

```rust
pub fn lat_lng_to_xyz(coord: &[f64; 2]) -> [f64; 3] {
    let [lat, lng] = coord.map(|f| f.to_radians());
    let x = lat.cos() * lng.cos();
    let y = lat.cos() * lng.sin();
    let z = lat.sin();

    [x, y, z]
}
```

Storing the points on a sphere is easier / faster to compute than storing the point on an approximation of the real earth shape.
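To see why the projection matters, here is a small sketch comparing nearest-neighbour ordering near the antimeridian. `lat_lng_to_xyz` is the function from this PR; the two distance helpers are hypothetical and only serve the illustration:

```rust
/// Conversion from this PR: project a [lat, lng] pair (in degrees)
/// onto a sphere of radius 1.
pub fn lat_lng_to_xyz(coord: &[f64; 2]) -> [f64; 3] {
    let [lat, lng] = coord.map(|f| f.to_radians());
    [lat.cos() * lng.cos(), lat.cos() * lng.sin(), lat.sin()]
}

/// Squared Euclidean distance treating lat/lng as if they were orthogonal
/// coordinates (the old, incorrect view).
pub fn squared_distance_lat_lng(a: &[f64; 2], b: &[f64; 2]) -> f64 {
    a.iter().zip(b.iter()).map(|(p, q)| (p - q).powi(2)).sum()
}

/// Squared Euclidean (chord) distance between two points on the unit sphere.
pub fn squared_distance_xyz(a: &[f64; 3], b: &[f64; 3]) -> f64 {
    a.iter().zip(b.iter()).map(|(p, q)| (p - q).powi(2)).sum()
}
```

Take `[0.0, 179.0]` as the origin: the point `[0.0, -179.0]` is only 2 degrees of longitude away, yet the naive lat/lng distance ranks `[0.0, 170.0]` as closer. The chord distance between the projected points gives the right order.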

But when we need to compute the distance between two points, we still need to use the haversine distance, which works with latitude and longitude.

So, to do as little computation as possible at search time, I'm now associating every point with its `DocId` and its lat/lng.
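For reference, a minimal sketch of the haversine distance on `[lat, lng]` pairs in degrees. The function name and the Earth radius constant are assumptions of this sketch, not necessarily what milli uses:

```rust
/// Mean Earth radius in meters (assumed value for this sketch).
const EARTH_RADIUS_M: f64 = 6_371_000.0;

/// Great-circle distance in meters between two [lat, lng] points in degrees.
pub fn haversine_distance(a: &[f64; 2], b: &[f64; 2]) -> f64 {
    let [lat1, lng1] = a.map(|f| f.to_radians());
    let [lat2, lng2] = b.map(|f| f.to_radians());

    let dlat = lat2 - lat1;
    let dlng = lng2 - lng1;

    // Haversine of the central angle between the two points.
    let h = (dlat / 2.0).sin().powi(2)
        + lat1.cos() * lat2.cos() * (dlng / 2.0).sin().powi(2);

    // Clamp to 1.0 to guard against floating-point overshoot before `asin`.
    2.0 * EARTH_RADIUS_M * h.sqrt().min(1.0).asin()
}
```

Keeping the original lat/lng next to each stored point means this function can be called directly, without converting the xyz coordinates back.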

Co-authored-by: Tamo <tamo@meilisearch.com>

2060: chore(all) set rust edition to 2021 r=MarinPostma a=MarinPostma

Set the Rust edition for the project to 2021.

This raises the MSRV to v1.56.

#2058

Co-authored-by: Marin Postma <postma.marin@protonmail.com>